Sysconf 2025 Review

Nov 2025 • 7 min read

On the 8th of November, I attended Sysconf 2025. This was the best Sysconf yet, although I barely had time to enjoy the talks at the last one because I was a speaker.

This time, I was able to attend more talks and had a blast.

I enjoyed some of the sessions so much that I decided to write a quick recap to crystallise my thoughts on each of them. Because the conference ran two sessions in parallel, I unfortunately could not watch them all.

The SysDesign Ethos

This was Ayo talking about what SysDesign is and what they stand for. I only caught the tail end, but his talk was valuable beyond understanding what Sysconf/SysDesign is. We should all be learning from first principles, reading, and making simple things to learn and grow.

One thing I’ve found very useful (and wish I had learned and practised earlier) is the power of compound effects. When aiming to learn or grow in any area, I’d typically wait until I had time to focus on that one thing, meaning I only grew in one area at a time. It also unfortunately meant that my growth in several long-term areas was hindered because I just never started.

But with slow, consistent growth, e.g. committing to one hour of deep focus per day no matter what, I’m able to consistently create, learn and grow. You’d be surprised at how much you can achieve in just one week if you adopt this principle. Now I just need to learn how to take breaks (😅).

Finally, please READ. Read long books. Difficult books. Research papers. Blog posts. Read wide, and read consistently.

The full video is on YouTube.

Whisperer: An Elixir Based Multi Agent Framework

In this talk, Pelumi preached about Elixir and did a pretty good job of convincing us to try it out. The main premise was that building multi-agent systems is already a common pattern in Elixir (and functional programming languages as a whole), making it relevant for building agentic systems. He also spoke a bit about common workflow patterns that serve as a great foundation for designing agentic workflows.

The concept of an LLM for observability actions (MonitorLizard) was interesting to me. I asked about hallucination and he gave a “no bullshit” answer, which I appreciated: while some agentic design patterns try to prevent hallucination, they are not crash-proof. That makes sense as a practical drawback, and it is one that durable execution frameworks can help address.

You can find the full video on YouTube.

Trustless Federated Learning at Edge Scale

Paul is a researcher who believes the models of the future should be trained on billions of independent devices. One drawback of the way models are trained today is the lack of privacy. What if we could have our devices contribute to anonymised learning and prevent big companies from harvesting our data for training purposes?

As is common with research, this was mostly theoretical. It provides a very useful thought experiment about the future of training (and maybe even inference). Full talk is here.

The AI’s Toolbox: Solving the Systems Challenges of a Multi-Tool Data Agent

Zainab spoke about the systems you need to build around an LLM to make it reliable, secure and truly useful for an enterprise product. I enjoy it when people speak more about challenges and the thinking behind solutions. It was also not surprising how much of a game-changer MCP (Model Context Protocol) was for the Decide team.

This talk was also a great example of how research is forever relevant. As a company, you can solve the most complex problems by investing in research. It seems obvious, but it’s not often the reality because of stakeholder requirements, timelines, etc. Zainab mentioned that she mostly does the research and hands over to other members of the team, which makes sense. A dedicated engineer is often more feasible for in-depth asks like this.

Another interesting concept explored in this talk was sandboxing for LLM output. They isolate all the LLM execution in a secure, containerised environment with resource limits and clean state. It reminds me of the bulkhead pattern, which prevents a failure in one part of a system from affecting the others.
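To make the isolation idea concrete, here is a minimal sketch of my own, not the Decide team's implementation. It swaps the container for a plain child process, which is a much weaker boundary, but it shows the same three ingredients: a separate execution context, a resource limit (here only a wall-clock timeout) and clean state (a throwaway working directory). The `run_untrusted` name and its return shape are hypothetical.

```python
import subprocess
import sys
import tempfile


def run_untrusted(code: str, timeout_s: float = 2.0) -> dict:
    """Run a snippet in a separate interpreter so its failures stay contained.

    Assumption: a child process stands in for a real container here.
    It does NOT restrict network or filesystem access.
    """
    # Clean state: a fresh temporary directory that is deleted afterwards.
    with tempfile.TemporaryDirectory() as workdir:
        try:
            proc = subprocess.run(
                # -I runs Python in isolated mode (ignores environment
                # variables and the user's site-packages directory).
                [sys.executable, "-I", "-c", code],
                cwd=workdir,
                capture_output=True,
                text=True,
                timeout=timeout_s,  # resource limit: wall-clock time only
            )
        except subprocess.TimeoutExpired:
            # The child is killed; the caller keeps running (the bulkhead).
            return {"ok": False, "stdout": "", "error": "timeout"}
        return {
            "ok": proc.returncode == 0,
            "stdout": proc.stdout,
            "error": proc.stderr,
        }


if __name__ == "__main__":
    print(run_untrusted("print(1 + 1)"))
    print(run_untrusted("while True: pass", timeout_s=0.5))
```

A crash or an infinite loop in the snippet only affects the child process, which is the bulkhead property in miniature. A production setup would add memory, CPU, network and filesystem restrictions on top.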

They also use a self-correcting loop to provide resilience and a simple form of reinforcement learning for their LLMs. I was not totally convinced because LLMs can be sneaky. They have been known to “fix” tests by deleting them. But for simple errors, I think a loop with retry guards is sufficient. Ultimately, it needs more guardrails such as human-in-the-loop review and LLM-as-a-judge.

Watch the full talk on YouTube.
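For illustration, this is roughly what I picture when I say a loop with retry guards. It is a sketch under my own assumptions, not what was shown in the talk: `generate` stands in for the LLM call, `validate` for whatever check produces the error, and the two guards are an attempt cap and a stop when the same error repeats. All names are hypothetical.

```python
from typing import Callable, List, Optional, Tuple


def self_correcting_loop(
    generate: Callable[[str, Optional[str]], str],
    validate: Callable[[str], Optional[str]],
    task: str,
    max_attempts: int = 3,
) -> Tuple[Optional[str], List[str]]:
    """Retry generation, feeding each validation error back as feedback.

    Returns (accepted_output, errors_seen). The output is None when the
    loop gives up, which is the point to escalate to a human or a judge.
    """
    errors: List[str] = []
    feedback: Optional[str] = None
    for _ in range(max_attempts):  # guard 1: hard cap on attempts
        candidate = generate(task, feedback)
        error = validate(candidate)
        if error is None:
            return candidate, errors
        errors.append(error)
        # Guard 2: the same error twice in a row means retrying is not helping.
        if len(errors) >= 2 and errors[-1] == errors[-2]:
            break
        feedback = error
    return None, errors


# Hypothetical stand-ins for the model and the checker.
def fake_model(task: str, feedback: Optional[str]) -> str:
    return "SELECT 1" if feedback else "SELEC 1"


def stuck_model(task: str, feedback: Optional[str]) -> str:
    return "SELEC 1"


def check_sql(query: str) -> Optional[str]:
    return None if query.startswith("SELECT") else "query must start with SELECT"


if __name__ == "__main__":
    print(self_correcting_loop(fake_model, check_sql, "count users"))
    print(self_correcting_loop(stuck_model, check_sql, "count users"))
```

The guards only bound the loop. They do nothing about a model that satisfies the validator in an unintended way, which is why the extra guardrails still matter.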

How to Develop Intuition About AI Agents, or Introduction to Graph Theory

In one of my favourite talks of the day, Justin spoke about how to reason about AI agents using graph theory. He went through a detailed introduction to the fundamentals of graph theory, including the mathematical definitions.

The aim of the talk was to give us a mental model of how agents behave by reframing them through the lens of graph theory. This mental model then makes it easier to predict and debug agents’ behaviour. This is a good example of applying fundamental principles to a practical problem.

Graph theory has a lot of use cases, and I encourage you to spend some time learning the fundamentals. Some useful resources:

- Building effective agents - Anthropic

- Full course catalog on Graph Theory

- Another great-looking course

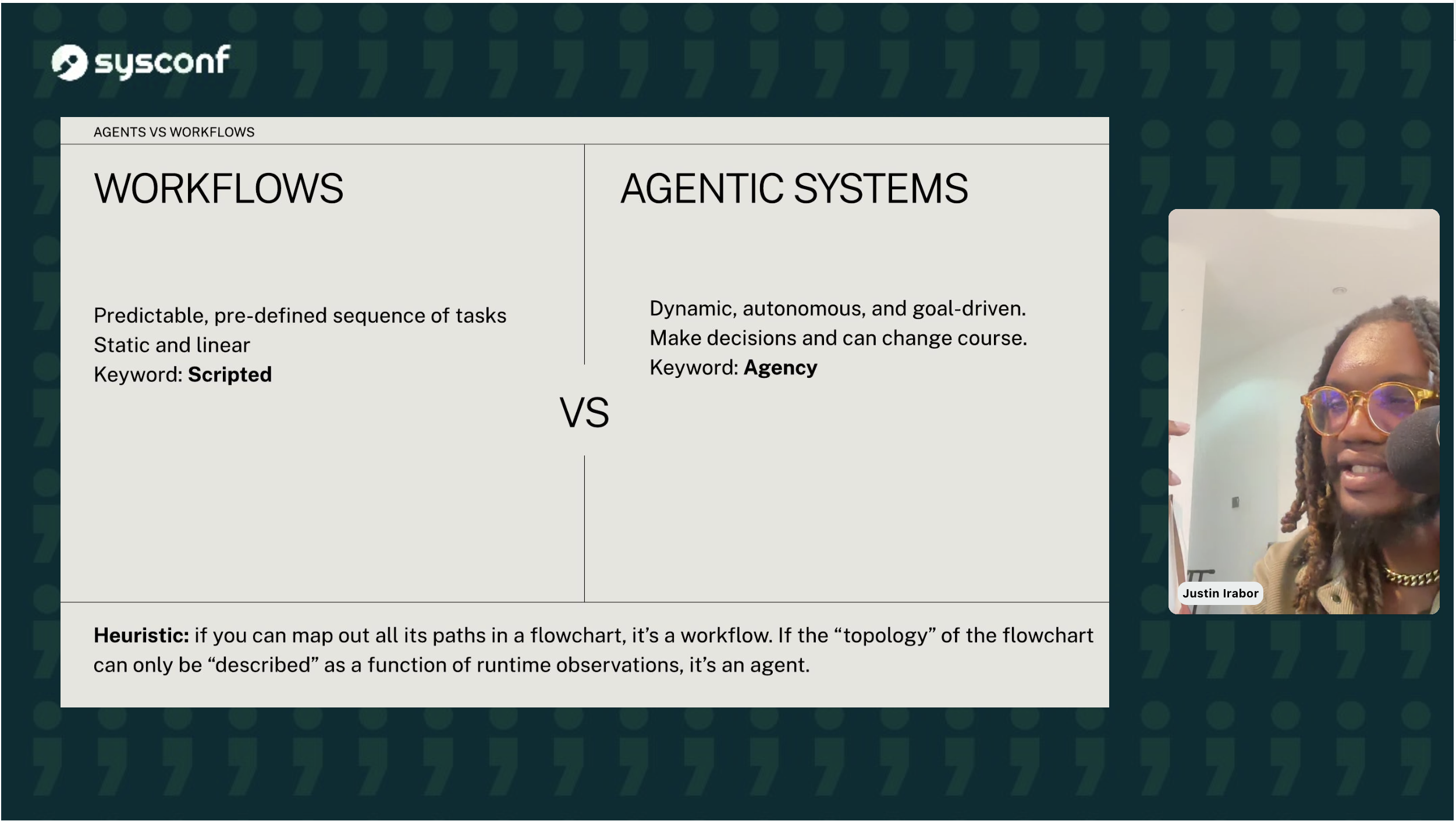

One observation that is probably only relevant to me: he mentioned that the difference between workflows and agents is agency. It sounds obvious in hindsight: a workflow is a defined list of steps that need to be executed, while agents can make decisions and execute steps that were not predicted or planned for.

A workflow is generally easier to reason about because it ends up being a DAG (Directed Acyclic Graph) most of the time, while agents will surely have more complex graph patterns. While most of the automation I build is related to workflows, it’s a useful thought experiment for eventually building more complex, interoperable automation with agents.
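A small sketch of why the DAG property makes workflows easier to reason about: a DAG always has a topological order, so you can list the steps in a valid execution sequence up front. The example below uses Python's standard `graphlib` module. The two graphs are made up for illustration, and modelling an agent as an observe/plan/act cycle is my own simplification, not something taken from the talk.

```python
from graphlib import CycleError, TopologicalSorter
from typing import Dict, List, Optional, Set


def execution_order(graph: Dict[str, Set[str]]) -> Optional[List[str]]:
    """Return a valid step order if the graph is a DAG, otherwise None.

    `graph` maps each step to the set of steps it depends on.
    """
    try:
        return list(TopologicalSorter(graph).static_order())
    except CycleError:
        # A cycle means there is no fixed order that can be planned up front.
        return None


# Hypothetical workflow: a fixed pipeline, so it is a DAG.
workflow = {
    "fetch": set(),
    "transform": {"fetch"},
    "load": {"transform"},
}

# Hypothetical agent loop: each step feeds the next, forming a cycle.
agent = {
    "plan": {"observe"},
    "act": {"plan"},
    "observe": {"act"},
}

if __name__ == "__main__":
    print(execution_order(workflow))  # ['fetch', 'transform', 'load']
    print(execution_order(agent))     # None
```

For the workflow you can predict every step before running anything. For the agent graph the only way to know what happens is to walk it, which is where the mental model from the talk becomes useful.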

Watch the full talk on YouTube.

Dead Programs Tell Tales: A Peek at Coredumps

Somtochi gave a thorough introduction to coredumps and how to debug them. I thought it was very brave to do a live demo of a coredump debugging session, and it went very well.

Like monitoring, coredump debugging is not a commonly referenced topic until it is needed for troubleshooting. It is good to practise debugging before you need it, and to understand the fundamentals of what you’re doing when troubleshooting. Somtochi does a fantastic job of providing all of this information, so watch the talk.

I had some extra thoughts about the sensitivity of coredumps and the dangers of taking coredumps from a running process, which were covered in the Q&A section. If you’re interested in how to reason about coredumps in Node.js, read How good is your memory? on this blog.

Watch the full talk on YouTube.

A Panel on Technical Leadership

This was a panel on technical leadership with five engineering leaders. The main takeaways for me were about excellence and doing great work. They also gave a lot of useful advice about promotions and accountability, but it all ultimately boiled down to excellence.

I’m curious what more junior people learned from this talk, so feel free to reach out or mention the Sysconf team in a tweet!

Watch the full talk on YouTube.

Forget the 1 Billion Rows Challenge, Let’s Solve The I, Zombie Endless

This was my last talk of the day so I was a bit distracted. It was so good though that I’ve already rewatched it on YouTube. Fanan provides a great introduction to the fundamentals of concurrency in Go, and how to simplify overwhelming problems.

The talk goes through solving a challenging problem with great fundamentals, which fit really well into the theme of the day. Other attendees also seemed very tuned-in and engaged.

See the full video on YouTube.

Conclusions

It was a long day and there are several talks that I missed entirely (or mostly):

- What Makes It Go Brrrrr? An Introduction to the Inner Workings of LLM Inference Engines by Habeeb Shopeju

- Networking Stalemates: An Insider View to CLOSE_WAIT Sockets by Emmanuel Bakare

- How We Handle Data Encryption at InfraRed by Allen Akinkunle

All the videos are available on YouTube, so I’ll try to catch them there. I also spent very little time networking, so if you want to have a chat about anything, feel free to reach out! I spend a lot of time thinking about research, SRE, YouTube and machine learning these days. Any of these topics is a great way to get my attention.

See you at Sysconf ’26!

To help me grow on YouTube and learn about monitoring, machine learning, and SRE, please subscribe here.

And to get notified about new posts, please subscribe here.